In the 1982 film Firefox, a retired fighter pilot played by Clint Eastwood is summoned to steal a state-of-the-art stealth aircraft from the Soviet Union. The fictional MiG-31 Firefox uses a helmet that can sense brain signals to manage the plane’s systems. “Remember, you must think in Russian,” says the Russian scientist collaborating with Eastwood’s character. The brain-machine interface (BMI), which may have seemed outlandish in 1982, is a good example of how science fiction can be prophetic: Prototype BMIs are a reality today.

No emerging technology is potentially more important to the military. BMI (also called brain-computer interface) allows a direct link between electrical signals in the brain and a computer that processes them to cause a machine to act. The prospective benefits should be obvious: A military thrives on expedient information flow.

A force whose command and control relies on machines can deliver fires and effects faster than an adversary whose command and control does not. A traditional human-machine interface clumsily links the commander to the human who pushes the button of the machine that conducts the fire. The pathway could contain multiple humans and information systems before the commander’s thoughts reach the point of action. The brain-machine interface eliminates most of the pathway’s nodes, speeding execution. This preserves the most precious commodity in a military engagement: time.

Technology

Advances in neuroscience regularly unravel the mysteries of the human brain. The brain comprises nearly a hundred billion nerve cells called neurons, which transmit electricity through chemical reactions. In 1924, German physician Hans Berger invented a method of measuring these signals by placing silver electrodes on the scalp—the electroencephalogram (EEG).

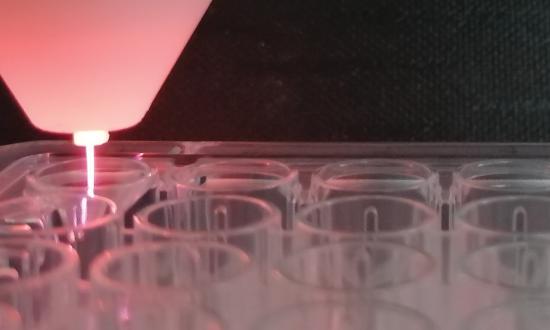

The technology has advanced; today, microelectrodes inserted into brain tissue measure the electricity in the extracellular space. With ever-more sensitive microelectrodes in more areas of the cortex, scientists can correlate the firing of cortical neurons with observed neurophysiological tasks and states. The BMI theory is that if the readings can be encoded into electrical signals, amplified, and sent to machine algorithms that can interpret the signal, a machine can conduct physical tasks corresponding to thought.1 Numerous examples exist, ranging from rats opening food dispensers and monkeys playing video games to humans operating prosthetics.
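The chain described above (read, amplify, interpret, act) can be sketched in a few lines of Python. This is only a toy illustration under loud assumptions: the function names, the three-electrode readings, the gain, and the decoder weights are all hypothetical, and a simple weighted sum stands in for whatever interpretation algorithm a real system would use.

```python
from typing import List, Sequence

def amplify(samples: Sequence[float], gain: float) -> List[float]:
    """Scale raw electrode readings by a fixed gain."""
    return [gain * s for s in samples]

def decode(features: Sequence[float],
           weights: Sequence[Sequence[float]]) -> List[float]:
    """Linear decoder: each output is a weighted sum of the input features."""
    return [sum(w * f for w, f in zip(row, features)) for row in weights]

def to_command(velocity: Sequence[float], threshold: float = 0.5) -> str:
    """Map a decoded two-dimensional velocity to a coarse machine command."""
    vx, vy = velocity
    if max(abs(vx), abs(vy)) < threshold:
        return "hold"  # signal too weak to act on
    if abs(vx) >= abs(vy):
        return "right" if vx > 0 else "left"
    return "up" if vy > 0 else "down"

# Hypothetical readings from three electrodes (arbitrary units).
raw = [0.02, 0.10, 0.01]

# Hypothetical decoder weights; a real system would fit these to recorded data.
weights = [
    [1.0, 0.5, -1.0],   # contribution of each electrode to x velocity
    [-0.5, 0.0, 1.0],   # contribution of each electrode to y velocity
]

signal = amplify(raw, gain=10.0)
velocity = decode(signal, weights)
command = to_command(velocity)
print(command)
```

The threshold in `to_command` is one possible design choice: it keeps the machine idle when the decoded signal is weak rather than acting on noise.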

These advances have ushered in a commercial technological revolution to bring BMI to consumers. Most notable is Neuralink, one of whose aims is to improve the lives of paralysis patients by allowing them to access assistive devices with thought.2 Founded by Elon Musk, Neuralink recently demonstrated the wireless transmission of brain signals from microelectrodes inserted into a pig’s brain. Musk hopes that humans will eventually interface directly with devices by thought, eliminating the need for keyboards and touchscreens.

Nissan has been pursuing what it calls Brain-to-Vehicle technology (B2V) that could assess a driver’s intentions and speed up the execution of tasks to create a safer environment.3 And for gamers, NextMind (acquired by Snap in 2022) is developing a kit that consists of a noninvasive band intended to sense the visual cortex, allowing video game play to be controlled by looking at objects without external eye tracking.4 Numerous companies and startups are racing to get BMI technology to market.

Why

Imagine the responsibilities of a tactical action officer (TAO) in a combat information center (CIC). The TAO controls navigation, engineering, and weapon systems, which must be mastered to defend against all threats to the ship. If there is a ship’s compartment analogous to the brain and central nervous system, it is CIC. There, the sensors pipe in critical information from all systems, and the TAO interfaces with the weapon systems. A TAO in CIC needs to process large amounts of information, rapidly analyze it, and make quick decisions. If the ship’s “brain” were linked to a human brain, the TAO could interface directly with the ship, sense all information, and make the ship come to life through thought alone.

Consider the lethality of an antiship missile defense system. Lethality can be expressed in this way: Overall kill probability is the product of the probability of detection and the probability that a specific system can kill a target.5 Because of the multiplication, increasing either term’s value increases the overall kill probability, as long as the other term is not zero.
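As a rough worked illustration of this relationship, write the probability of detection as $P_D$ and the probability that the system kills a detected target as $P_{K \mid D}$. The symbols and the numbers below are assumptions chosen for easy arithmetic, not values from the cited analysis.

```latex
P_K = P_D \cdot P_{K \mid D}
\qquad\text{baseline: } 0.80 \times 0.70 = 0.56

\text{better detection: } 0.90 \times 0.70 = 0.63
\qquad
\text{better interceptor: } 0.80 \times 0.80 = 0.64
```

In this illustration, improving either term from its baseline raises the product above 0.56.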

A better-performing interceptor, an improved technique (such as firing multiple interceptors), or both can increase the system’s capability. Increasing the probability of detection is more difficult: It requires either building a better system or improving command and control by reducing reaction time. A fully automated or semiautomated mode could accomplish this (with a human in the loop for safety). In a surface engagement in which supersonic and hypersonic weapons make milliseconds precious, BMI would improve reaction time and raise the probability of success. The advantages of a BMI extend to any time-critical task. A conning officer and helmsman, an air controller, or even Marines ashore in combat need expedient communications with one another and with their equipment.
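To see why firing multiple interceptors raises the system’s capability, consider a minimal Python sketch. It assumes that each shot succeeds or fails independently with the same probability, which real engagements may not satisfy, and the function names and numbers are hypothetical.

```python
def salvo_kill_probability(p_single: float, shots: int) -> float:
    """Chance that at least one of `shots` independent interceptors kills."""
    return 1.0 - (1.0 - p_single) ** shots

def overall_kill_probability(p_detect: float, p_kill: float) -> float:
    """Overall kill probability as detection times kill-given-detection."""
    return p_detect * p_kill

# Hypothetical values for illustration only.
p_detect = 0.80   # probability the threat is detected in time
p_single = 0.70   # probability one interceptor kills a detected target

single = overall_kill_probability(p_detect, p_single)
salvo = overall_kill_probability(
    p_detect, salvo_kill_probability(p_single, shots=2)
)

print(f"one interceptor:  {single:.3f}")
print(f"two interceptors: {salvo:.3f}")
```

Under these assumptions the second interceptor helps, but the result can never exceed the probability of detection, which is why shortening reaction time matters as much as improving the weapon.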

DARPA has been researching invasive electrodes for the past 20 years, but it has recently launched the Next-Generation Nonsurgical Neurotechnology (N3) program to develop noninvasive BMIs—wearable systems.6 In China, Tianjin University has touted research into a brain-controlled chip it calls “Brain Talker.”7 In Russia, researchers have developed a sophisticated neural interface called “Balalaika” that combines multiple types of EEG monitoring to interpret the brain’s planning of physical tasks.8 Each of these evolving systems has implications for warfighters.

Ethics

As these technologies improve, it is important to consider drawbacks of BMI that might counsel against its use. These are not so much technological as ethical. According to Philip Taraska, an ethicist and former U.S. Army Green Beret, four moral criteria must be considered when introducing human enhancement: reversibility or nonpermanence of the enhancement; upholding existing military values; voluntary informed consent free of coercion; and the prior exhaustion of alternatives to human enhancement.9 Taraska’s doctoral dissertation provides a thorough discussion of how these criteria apply to BMI while acknowledging that BMI could pass these moral criteria.10

Taraska’s argument applies the criteria to the U.S. military. But they may not apply equally, or at all, to potential U.S. adversaries, which puts the United States at a disadvantage. For example, consider reversibility and consent. If invasive interfaces (electrodes inserted into a brain) were the only viable options for military application, then reversibility would be in question even if a service member gave consent, and that would be an obstacle for the U.S. armed forces. On the other hand, adversaries who are not especially interested in individual rights would probably not see inserting electrodes into brains as a concern. China and Russia would care more about the military advantages to be gained than about the well-being of troops (as the war in Ukraine shows). A fleet of ships and drones employing BMI technology will have a tactical advantage against a fleet without it. Without a noninvasive (reversible) BMI system for the U.S. military, its adversaries may have a first-mover advantage.

Future

Anyone who holds a security clearance is reminded in annual training that classified material can be handled in three places: in an accredited secure space approved for the classification level; on an authorized government information system; or in the hands of a cleared person who carries it using appropriate security protocols. Another critical place in which we store classified material is often overlooked: our brains.

BMI raises questions about what such technology might mean for this information. What if electrode technology improves so much that complete mappings of all neurons across the brain can be associated with memories? Can a person’s BMI be hacked to extract information from a mind? Could someone be interrogated merely by hooking them up to a BMI? These questions may seem outlandish today, but in the future, human minds could cease to be any more secure than computers.

Whatever the long-term future holds, BMI is on the cusp of being a reality for the warfighter today. The next ten years will see military BMI technology spread. The United States will be at the forefront of advanced BMI technology, but ethical questions could impede military adoption.

Tomorrow’s victors may well be characterized by who employs BMI technology and who does not, with a significant advantage for the former. Imagine swaths of unmanned surface and underwater vehicles being controlled by the thoughts of talented operators working lethally from the safe confines of a fortified base with no risk to person or team. Proponents of Offense-Defense theory would find this unsettling. However controversial military BMI becomes, it will be the culmination of the information age, accelerating information beyond what can be fathomed today. For this reason, BMI should be a major pursuit of the U.S. Navy.

1. Miguel Nicolelis, Beyond Boundaries (New York: Times Books, 2011), 123.

2. Neuralink, neuralink.com/applications/.

3. Sukesh Mudrakola, “Nissan’s Brain-to-Vehicle Technology: Cars That Can Read Your Mind,” techgenix.com, 15 March 2018.

4. “Welcome NextMind!” newsroom.snap.com, 23 March 2022.

5. Debasis Dutta, “Probabilistic Analysis of Anti-Ship Missile Defence,” Defence Science Journal 64, No. 2 (March 2014): 123–29.

6. DARPA, “Six Paths to the Nonsurgical Future of Brain-Machine Interfaces,” 20 May 2019.

7. Rajesh Uppal, “Countries Race to Develop Brain Computer Interface (BCI) Technology to Reduce Soldier’s Cognitive Load and Enable Controlling Multiple Military Robots with The Speed of Thought,” International Defense, Security, & Technology, 18 January 2021.

8. Uppal, “Countries Race to Develop.”

9. Philip A. Taraska, How Can the Use of Human Enhancement Technologies in the Military Be Ethically Assessed? (Pittsburgh, PA: Duquesne University Electronic Thesis and Dissertations, 2017), 200.

10. Taraska, How Can the Use of Human Enhancement, 231–37.